Intro

There's a scene in The Wire that's always stuck with me.

Two detectives show up to a crime scene—one seasoned, one new. The veteran gives the rookie a piece of advice:

"You know what you need at a crime scene? Soft eyes. You got soft eyes, you can see the whole thing. You got hard eyes, you stand at the same tree, missing the forest."

That idea—soft eyes—has shown up over and over in my career.

Most gnarly bugs aren’t actually complex. They’re simple… just well-hidden. And the fastest way to stay stuck is to fixate too narrowly on what you think the problem is.

Which brings me to the next chapter in building an AI test debugger.

Recap

Here's a quick recap from last time:

a teammate wrote e2e tests that passed locally but failed in CI

instead of debugging directly, I built an agent loop to reason about the failures

it didn't work, until it sort of did

the unlock was giving the coding agent more context: a contract (describing how the test suite worked and which anti-patterns to avoid) plus a repair prompt (explaining how to use the contract and the details retrieved from the CI test failures)
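To make the contract-plus-repair-prompt idea more concrete, here is a minimal TypeScript sketch of how such a prompt could be assembled from a contract and a list of CI failures. The `CiFailure` shape, the `buildRepairPrompt` name, and the prompt wording are all hypothetical illustrations, not the actual contract or prompt used here.

```typescript
// Hypothetical shape of one failure pulled from a CI run (illustrative only).
interface CiFailure {
  testName: string;
  errorMessage: string;
  artifactPaths: string[]; // e.g. screenshots attached to the failed run
}

// Hypothetical helper: put the contract first, then instructions, then evidence,
// so the agent reads the rules before it sees the failures.
function buildRepairPrompt(contract: string, failures: CiFailure[]): string {
  const evidence = failures
    .map((f, i) => {
      const artifacts =
        f.artifactPaths.length > 0 ? f.artifactPaths.join(", ") : "(none)";
      return [
        `### Failure ${i + 1}: ${f.testName}`,
        `Error: ${f.errorMessage}`,
        `Artifacts: ${artifacts}`,
      ].join("\n");
    })
    .join("\n\n");

  return [
    "## Test suite contract",
    contract.trim(),
    "",
    "## Instructions",
    "Use the contract above to explain the likely cause of each CI failure below.",
    "Propose a fix that respects the contract and avoids its listed anti-patterns.",
    "",
    "## CI failures",
    evidence,
  ].join("\n");
}
```

Keeping the contract separate from the per-run evidence means the contract can be written once and reused, while only the failure section changes between runs.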

Next Step: Closing The Loop

The solution I had was a good start but had some rough edges.

Up until now I was manually:

running the tool

collecting output

feeding it into the coding agent

So I wrapped everything into a single command (e.g., /my-command) that either I or the coding agent could invoke.

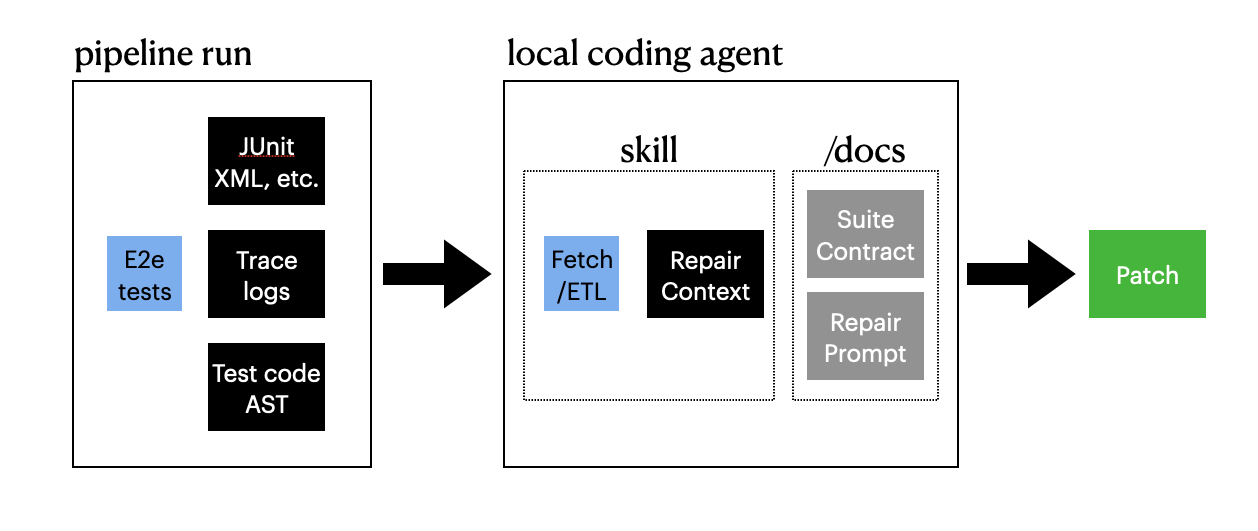

With the command in place, I could iterate faster over a loop:

agent proposes a fix

run it in CI

if it still fails, repeat
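The loop itself is simple enough to sketch. Below is a minimal TypeScript version, assuming two hypothetical hooks: `proposeFix` (hand the latest failure report to the coding agent and let it edit the code) and `runInCi` (push the change and wait for the pipeline result). Neither is a real agent or CI API; they stand in for whatever your tooling provides. The `maxRounds` cap is also an assumption, added so the loop can't spin forever.

```typescript
// Outcome of one CI run (hypothetical shape).
interface CiResult {
  passed: boolean;
  failureReport: string; // whatever the debugging tool extracted from the run
}

// The two steps of the loop, injected so the sketch doesn't depend on any
// particular coding agent or CI provider. Both are hypothetical.
interface RepairSteps {
  proposeFix(failureReport: string): Promise<void>; // agent edits the code
  runInCi(): Promise<CiResult>; // push and wait for the pipeline
}

// Propose a fix, run it in CI, and repeat on failure, up to maxRounds times.
// Returns the round on which CI went green, or null if it never did.
async function repairUntilGreen(
  steps: RepairSteps,
  initialReport: string,
  maxRounds = 5,
): Promise<number | null> {
  let report = initialReport;
  for (let round = 1; round <= maxRounds; round++) {
    await steps.proposeFix(report);
    const result = await steps.runInCi();
    if (result.passed) {
      return round;
    }
    // Feed the fresh failure details into the next round.
    report = result.failureReport;
  }
  return null; // still failing after maxRounds attempts
}
```

Injecting the two steps keeps the loop testable with fakes. A `null` result is the signal to stop letting the agent retry and step back to look at the bigger picture.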

After a few rounds, the agent stopped suggesting code changes. Instead it said:

"This might not be a test issue. It might be the CI environment."

The Call Was Coming From Inside The House

The agent was correct. But finding the smoking gun required a healthy dose of 1) patience and 2) soft eyes.

Patience

Patience was required because I had limited access to, and control over, the CI runtime environment, and it wasn't configured with debugging in mind. For example, I would finally get onto the machine, and it would shut down within 5 minutes of coming online. I could see the setting that controlled this but didn't have permission to change it.

Soft Eyes

Soft eyes were required because there were seductive clues that turned out to be red herrings, like the possibility that system state was being shared between CI runs.

In the end I got my access issues sorted, surveyed the crime scene, and found all of the relevant clues. So what was the issue?

Case Closed

For this Scooby-Doo reveal, we need to revisit one key detail from when the issue was first discovered: the test runs produced screenshot artifacts, and one of them was blank.

I remember being confused by this, looking into it, and finding evidence that seemed to point to a side effect of our architecture (we're using Playwright to test an application built on a dated version of Electron).

I simply thought this was a known limitation. Just noise.

It turns out it wasn't.

The blank screenshot was actually showing a failure condition in the application!

In the application under test, the flow goes like this:

the user performs an action that generates a report

the user opens the report by clicking a button in the application

the report (and this is the important bit) opens as a web page outside of the app

So what browser was used? The system default.

And what was the system default browser on this self-managed node?

Internet Explorer!

Does our application's report output work in Internet Explorer? No! And it's not supported either.

The Fix Is In

In the end the fix wasn't a code change. It was changing the system default browser.
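A preflight check could keep this kind of environment problem from silently coming back. The sketch below assumes a Windows runner (Internet Explorer implies one, but that is an assumption) and inspects the output of `reg query` for the per-user default handler of `http` links. The `UserChoice` registry key and the `IE.HTTP` ProgId are standard Windows values; the function names and the blocklist are hypothetical.

```typescript
// Assumption: a Windows runner (Vista or later), where the per-user default
// handler for http links is stored as a ProgId under this registry key.
const USER_CHOICE_KEY =
  "HKCU\\Software\\Microsoft\\Windows\\Shell\\Associations\\UrlAssociations\\http\\UserChoice";

// ProgIds we refuse to run the suite with. "IE.HTTP" is the ProgId that
// Internet Explorer registers for http links. Hypothetical list; extend as needed.
const UNSUPPORTED_PROG_IDS = ["IE.HTTP"];

// Extract the ProgId value from text printed by `reg query <key> /v ProgId`,
// e.g. a line like "    ProgId    REG_SZ    ChromeHTML".
function parseProgId(regQueryOutput: string): string | null {
  const match = regQueryOutput.match(/^\s*ProgId\s+REG_SZ\s+(.+?)\s*$/m);
  return match ? match[1] : null;
}

// Throw before any test runs if the default browser is missing or unsupported.
function checkDefaultBrowser(regQueryOutput: string): void {
  const progId = parseProgId(regQueryOutput);
  if (progId === null) {
    throw new Error(`Could not read ProgId from ${USER_CHOICE_KEY}`);
  }
  if (UNSUPPORTED_PROG_IDS.indexOf(progId) !== -1) {
    throw new Error(`Default browser on this runner is ${progId}; reports will not render.`);
  }
}
```

On the runner, you could capture the output by running `reg query` against `USER_CHOICE_KEY` with `/v ProgId` (for example via Node's `child_process.execFileSync` in a Playwright `globalSetup` file) and pass it to `checkDefaultBrowser`. If the `UserChoice` key doesn't exist, `reg query` exits with an error, which is worth treating as a failed check too.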

The agent didn’t solve the problem directly. It forced me to zoom out.

And the answer was there the whole time. I just needed soft eyes to see it.